Have you ever been unable to find your own website on Google?

This is quite likely to happen if your website is brand new. However, a more established website isn’t safe from it either.

Therefore, you need to know how to protect yourself from this negative experience, unless you’re prepared to sell your online business.

Luckily, in this article you will find seven common reasons why your website can’t be found on Google, along with solutions for fixing each of them.

Let’s start!

-

Google Isn’t Familiar With Your Website Yet

Let’s say you have just launched your website. It is completely new, and Google hasn’t had a chance to notice it yet. Luckily, this won’t last long. Google will discover your website eventually; you just need to wait a bit.

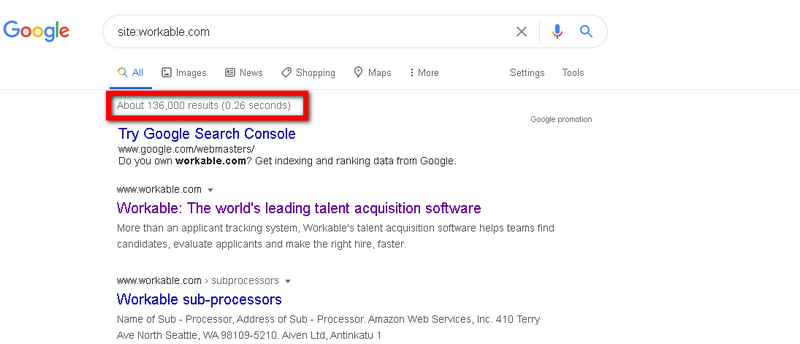

It isn’t hard to check whether your website is on Google’s radar. Type the “site:yourwebsitedomain.com” operator into the search box:

From the example above, you can see that Google knows about this website and returned the results in just 0.26 seconds.

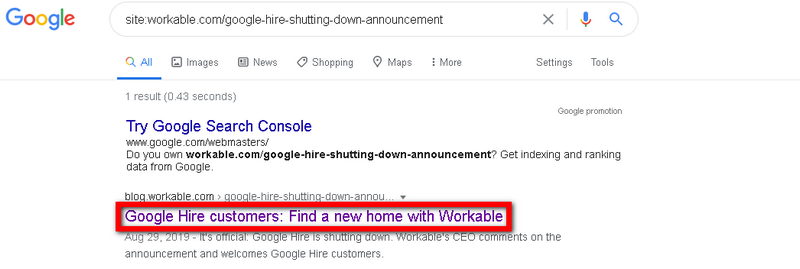

Nevertheless, there are cases when a search engine can’t find a specific page on your website. To check whether Google knows about the page you’re trying to rank, use the “site:websitedomain.com/page-per-your-request” operator:

To help Google discover all the pages on your website, create a sitemap and submit it through Google Search Console.
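If your site doesn’t generate a sitemap for you, a minimal one can be built by hand. The sketch below is only an illustration that assumes a short, fixed list of pages; the URLs and the `build_sitemap` helper are hypothetical, and most CMS platforms and SEO plugins produce a sitemap automatically.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML document (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; replace them with the real URLs of your site.
pages = [
    "https://yourwebsitedomain.com/",
    "https://yourwebsitedomain.com/page-per-your-request",
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Saving the output as sitemap.xml in the root of your site gives you a file you can then submit in Google Search Console.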

-

You Might Be Blocking Search Engines From Indexing Your Pages

There is nothing strange about a website owner wanting to hide some pages from Google. It is easy to do with a “noindex” meta tag, a piece of HTML code that has this structure:

<meta name="robots" content="noindex"/>

As a result, pages tagged as “noindex” won’t be indexed by Google (even if your sitemap has been submitted in Google Search Console).

Web developers sometimes add the “noindex” meta tag during the early stages of a website’s development to keep Google from indexing an unfinished site. The trouble is that they might forget to remove the tag before the website’s release.

Luckily, if you review the “Coverage” report in Google Search Console, you will be able to see every page that has been tagged as “noindex.”

Review this report carefully and see whether any pages need the “noindex” tag removed. Removing it lets search engines index those pages again.
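You can also check a page’s HTML for the tag yourself. The following is a minimal sketch using only Python’s standard library; the `RobotsMetaParser` class and `has_noindex` function are names made up for this example, and the sketch looks only at the robots meta tag, not at HTTP headers or crawler-specific tags.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            # A tag may hold several comma-separated directives.
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page source left over from development.
sample_page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(has_noindex(sample_page))  # True
```

Running a check like this over your key pages before a release is one way to catch a forgotten tag early.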

-

The Pages Are Blocked From Crawling

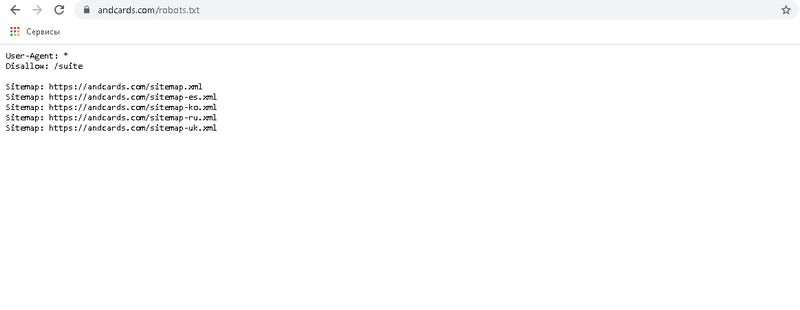

It is important to let search engines know which pages they can and cannot crawl. To do this, you use a robots.txt file on your site. URLs that you have blocked in your robots.txt file won’t be crawled by Google.

To figure out which pages are blocked from crawlers, review the “Submitted URL blocked by robots.txt” issues in the “Coverage” report within Google Search Console.

Keep in mind that this information is only available if Google has tried to crawl the URLs submitted in the sitemap. You can also check the file manually by opening “yourdomain.com/robots.txt” in your browser:

The directive that blocks Google from crawling every page on your website is “Disallow: /”. Therefore, if you see it under either of these user agents (User-agent: * or User-agent: Googlebot), it will block Google from crawling your pages.
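If your robots.txt file is long, reading it by eye gets error-prone. Here is a small sketch that uses Python’s standard `urllib.robotparser` module to test whether a given URL is blocked. The `is_blocked` helper and the sample rules are made up for illustration, and Python’s parser may not interpret every rule exactly the way Googlebot does, so treat the result as a first check rather than a final answer.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt, url, user_agent="Googlebot"):
    """Return True if the robots.txt rules disallow this URL for the user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical rules; paste the contents of your own robots.txt instead.
sample_robots = """\
User-agent: *
Disallow: /private/
"""

print(is_blocked(sample_robots, "https://yourdomain.com/private/report"))  # True
print(is_blocked(sample_robots, "https://yourdomain.com/blog"))  # False
```

With “Disallow: /” in place of “Disallow: /private/”, the function reports every URL on the domain as blocked.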

-

Lack of Authoritative Backlinks

As you know, Google uses many ranking factors to decide which websites deserve to rank high. The number of authoritative backlinks a website has is one of them.

Every backlink works as a kind of vote for a specific page on the website. In general, the more “votes” a page has, the higher it tends to rank on Google.

As you may have guessed, if your pages lack authoritative backlinks, they might not be noticed by Google.

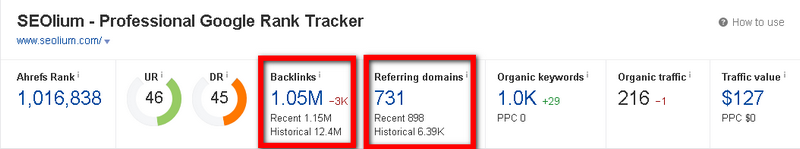

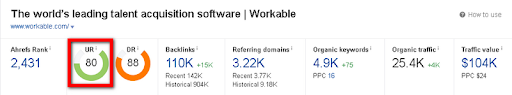

To keep an eye on your website’s backlinks, you can use the Site Explorer tool from Ahrefs. It provides a range of data, but two metrics matter most here: “Backlinks” and “Referring domains”:

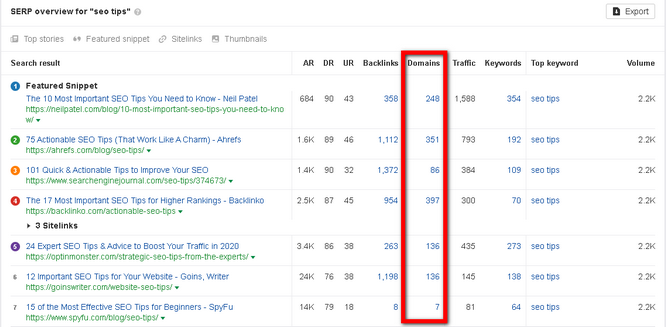

If you want an estimate of how many referring domains you need to rank on Google’s first page, use the “SERP overview” report in the Keywords Explorer tool:

Pay attention to the “Domains” column in the report. It will help you estimate how many referring domains you’re lacking.
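The calculation itself is simple arithmetic. As a rough sketch, you could take the median of the “Domains” values for the top results and subtract your own count. Using the median as the benchmark is an assumption made for this example, not something the report prescribes, and all the numbers below are invented.

```python
from statistics import median


def referring_domain_gap(competitor_domains, own_domains):
    """Estimate how many referring domains you lack versus the median competitor."""
    target = round(median(competitor_domains))
    return max(0, target - own_domains)


# Made-up "Domains" values for ten top-ranking pages.
top_results = [120, 85, 64, 210, 47, 93, 150, 38, 77, 101]
gap = referring_domain_gap(top_results, own_domains=30)
print(gap)  # 59
```

The median is less sensitive than the average to a single page with an unusually large number of referring domains.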

-

The Pages on Your Website Lack Authoritative Backlinks

When talking about Google’s ranking algorithm, we should never forget PageRank. According to the definition given by Search Engine Land, PageRank is Google’s system of counting link votes and determining which pages are most important based on them. These scores are then used along with many other things to determine if a page will rank well in a search.

It should be noted that Google stopped publishing PageRank scores a few years ago. Therefore, you can no longer compare the PageRank of your pages with that of the results in the SERP.

Fortunately, you can get a comparable metric by reviewing the URL Rating (UR) score available in Site Explorer:

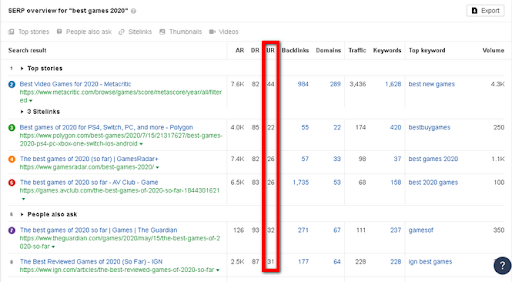

Moreover, you can compare the URL Rating of your page with that of the top-ranking pages by reviewing the “SERP overview” report in the Keywords Explorer tool:

To boost a page’s UR score, you will need to build more backlinks to this page. And don’t forget about internal linking as well.

Tip: Consider submitting your images to image submission sites for high-quality do-follow links.

-

You Didn’t Optimize Your Pages for Search Intent

Google’s primary goal is to show people the results that are most relevant to their queries. Consequently, your goal is to produce content optimized for your target audience’s search intent.

Basically, there are four types of search intent you should know about:

- Transactional (your target audience wants to buy something)

- Navigational (your target audience searches for a specific site)

- Commercial investigation (your target audience is searching for some product but hasn’t bought it yet)

- Informational (your target audience searches for some information)

Keep in mind that you should optimize your content for the keywords that match your target audience’s search intent. Otherwise, your page is unlikely to rank on the first page.

Tip: A good SEO platform can make all of this a lot easier for you. They don’t come cheap but you can always try most of them out for free like this SEMrush 14-day free trial. Alternatively, you can also use free SEO tools or check out various SEO resources online for tips and tricks that can help improve your site’s rank.

-

Check Whether Your Website Has Received a Penalty From Google

If your website has been penalized by Google, you might not be able to find it in the search results. This is quite unlikely, though. Let’s review the cases when these penalties might be the reason why you can’t find your website on Google.

There are two types of penalties: manual and algorithmic. A manual penalty happens when Google takes deliberate action to remove your site from the search results. An algorithmic penalty, on the other hand, happens when Google suppresses your pages in the search results because of quality issues.

Admittedly, it is rather rare for a site to be penalized manually. Still, if you want to make sure you don’t have any manual penalty from Google, you can check the “Manual actions” report in Google Search Console.

Unfortunately, Google won’t notify you if your website has been penalized algorithmically. The first sign of an algorithmic penalty is usually a sudden drop in your site’s traffic.
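One way to spot such a drop is to compare each week’s traffic with the week before. The sketch below assumes you have exported daily visit counts from your analytics tool; the `weekly_drops` function, the 50% threshold, and the sample numbers are all arbitrary choices made for this illustration. A flagged week is only a hint, since seasonality or tracking problems can cause drops too.

```python
def weekly_drops(daily_visits, threshold=0.5):
    """Return the indexes of weeks whose traffic fell below
    `threshold` times the traffic of the previous week."""
    # Sum the visits of each complete 7-day week.
    weeks = [
        sum(daily_visits[i:i + 7])
        for i in range(0, len(daily_visits) - 6, 7)
    ]
    return [
        n for n in range(1, len(weeks))
        if weeks[n - 1] > 0 and weeks[n] < weeks[n - 1] * threshold
    ]


# Made-up data: two steady weeks followed by a sharp fall.
visits = [1000] * 14 + [300] * 7
print(weekly_drops(visits))  # [2]
```

Here the third week (index 2) is flagged because its traffic is less than half of the previous week’s.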

To Sum Up

You can never predict whether something will end up hiding your website from Google.

Hence, it is critically important to know the possible issues your website might run into. Now you are familiar with seven reasons that you should keep an eye on.

Check for these issues and you shouldn’t have any difficulty finding your website in the search results.

Author’s bio: Sergey Aliokhin is a Marketing Manager at andcards. Apart from exploring different marketing and SEO things he likes reading, playing the bass, and studying martial arts.

Featured photo by Morning Brew on Unsplash